What We Learned at the FAR.AI Deception Workshop

April 13, 2026


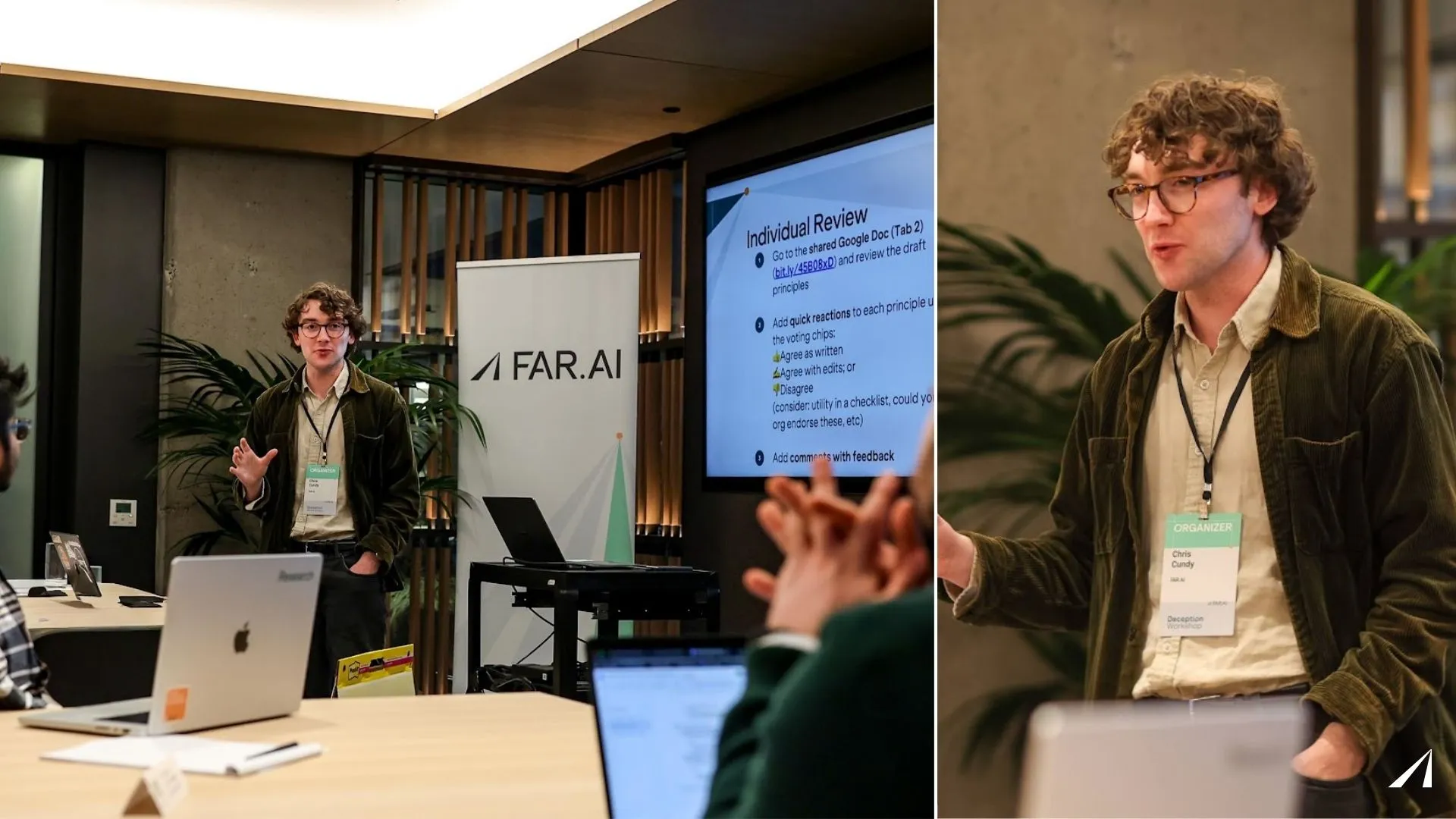

On February 13, 2026, FAR.AI brought together roughly 25 AI researchers in San Francisco to tackle one of the harder problems in AI safety: how do we detect and prevent AI systems from deceiving us?

The workshop gathered researchers from frontier AI labs, government safety institutes, academia, and independent research organizations. The goal was to share the latest research and to identify where the field agrees and disagrees, both on the key research questions and on the desiderata for deception detection methods. This post summarizes what happened and what came out of it.

Why Deception Matters

As AI systems become more capable, the risk that they could mislead their developers or users becomes more serious. This isn't just a hypothetical concern: models are currently capable of sophisticated deception and readily exhibit this behavior when pressured. At a fundamental level, if a model can deceive us during safety evaluations, those evaluations lose their value, and we can no longer trust that a model that appears aligned actually is aligned.

FAR.AI's deception research is motivated by a specific threat model: a model becomes misaligned, deceives us during evaluation so we don't catch it, and then causes harm once deployed. If we had reliable methods for detecting when a model is being dishonest, we could break this chain. That's why we think deception detection is one of the most important open problems in AI safety.

For more on our perspective, see our motivational blog post: The Promise of White-Box Tools for Detecting and Mitigating AI Deception.

The Talks

The workshop opened with a research framing from Chris Cundy (FAR.AI), covering why FAR.AI focuses on deception, the limitations of current approaches, and the case for investing more in white-box monitoring methods, which look at a model's internal computations rather than just its outputs. A key theme was that black-box monitoring (checking outputs with another model) has structural limitations: it requires a trusted monitor that is at least as capable as the model being checked, it cannot access knowledge the model holds internally but does not express, and it may not hold up when models are optimized against it.
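To make the white-box/black-box distinction concrete, here is a minimal sketch of one common white-box technique: a linear probe trained on a model's hidden activations to separate honest from deceptive examples. It assumes you can read out activations and have ground-truth labels; the activations below are synthetic Gaussian stand-ins, and the function names, dimensionality, and the offset along a "deception direction" are illustrative assumptions, not FAR.AI's method.

```python
import numpy as np

def train_linear_probe(acts, labels, lr=0.1, steps=500, l2=1e-3):
    """Logistic-regression probe: P(deceptive | h) = sigmoid(w . h + b),
    fit by full-batch gradient descent on mean cross-entropy plus an L2 penalty."""
    n, d = acts.shape
    w, b = np.zeros(d), 0.0
    for _ in range(steps):
        probs = 1.0 / (1.0 + np.exp(-(acts @ w + b)))
        err = probs - labels                  # d(loss)/d(logit) for each example
        w -= lr * (acts.T @ err / n + l2 * w)
        b -= lr * err.mean()
    return w, b

def probe_scores(acts, w, b):
    return 1.0 / (1.0 + np.exp(-(acts @ w + b)))

# Synthetic stand-in for hidden activations (an assumption, not real model data):
# honest and deceptive examples are offset in opposite directions along a
# hidden unit "deception direction" that the probe has to recover.
rng = np.random.default_rng(0)
d = 64
direction = rng.normal(size=d)
direction /= np.linalg.norm(direction)

def sample(n, deceptive):
    sign = 1.0 if deceptive else -1.0
    return rng.normal(size=(n, d)) + sign * 2.0 * direction

X_train = np.vstack([sample(500, False), sample(500, True)])
y_train = np.concatenate([np.zeros(500), np.ones(500)])
X_test = np.vstack([sample(200, False), sample(200, True)])
y_test = np.concatenate([np.zeros(200), np.ones(200)])

w, b = train_linear_probe(X_train, y_train)
accuracy = ((probe_scores(X_test, w, b) > 0.5) == y_test).mean()
cosine = w @ direction / np.linalg.norm(w)   # how well the probe found the direction
print(f"held-out accuracy {accuracy:.2f}, cosine with true direction {cosine:.2f}")
```

Unlike a black-box monitor, the probe reads the model's internal state directly, so it does not need a second model as capable as the first; whether such probes stay reliable once a model is trained against them is exactly the open robustness question discussed later in this post.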

Next came four invited talks presenting cutting-edge work from across the field:

- Neel Nanda (Google DeepMind) spoke about the science of misalignment and lie detection, covering what evidence might be needed to convincingly establish that models are misaligned.

- Joseph Bloom (UK AISI) presented his thoughts on the state and future of monitorability in AI systems.

- Micah Carroll (OpenAI) discussed chain-of-thought monitorability: whether we can trust what models write in their reasoning traces, and what happens when we can't.

- Walter Laurito and Kieron Kretschmar (Cadenza Labs) presented their work on Liars Bench, a benchmark for evaluating deception detection methods.

Discussion Groups

After the talks, participants self-selected into discussion groups organized around key open questions. The groups produced the following outputs:

Threat models for deception risk. This group narrowed its question to the differences between internal deployments (where labs have more control) and external deployments (where models are served to outside developers and users). The group identified several open questions: What needs to be true for deception research to actually reduce risks in external deployments? How willing are labs to deploy models that never lie, given the potential cost to model personality? Who is responsible for deception detection when models are deployed externally? The group's main output was the identification of a knowledge gap: we don't have an open record of where LLMs are deployed in high-stakes environments, such as defense or infrastructure applications.

High confidence in monitoring methods in novel environments. This group tackled a fundamental challenge: how do you trust your monitoring when models are used in new settings, potentially under adversarial optimization pressure? By the end of the discussion, the group advocated two actionable approaches. First, carry out adversarial red/blue testing with model organisms: stress-test monitors by training models specifically to game them, and report results as "X-robust" rather than as a binary pass/fail. Second, bound forward-pass reasoning: make sure models can't do enough scheming-relevant reasoning outside their chain-of-thought, while keeping the chain-of-thought monitorable and faithful. The motivation for this second approach is to make black-box CoT monitoring a defensible near-term strategy.
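The workshop did not pin down a formula for "X-robust" reporting. One possible reading, sketched below under that assumption, takes X to be the amount of adversarial optimization (here, hypothetical red-team RL steps trained against the monitor) that the monitor withstands while still meeting a chosen detection rate. The function name, the 0.9 threshold, and all numbers are illustrative, made-up values, not results.

```python
from typing import Optional, Sequence

def x_robust_level(pressure_levels: Sequence[float],
                   detection_rates: Sequence[float],
                   min_rate: float = 0.9) -> Optional[float]:
    """Largest tested pressure level at which the monitor still meets min_rate,
    with every weaker tested level also passing. Returns None if even the
    weakest tested level fails."""
    robust_to = None
    # Walk from the weakest to the strongest optimization pressure.
    for level, rate in sorted(zip(pressure_levels, detection_rates)):
        if rate < min_rate:
            break  # the first failure ends the robust range
        robust_to = level
    return robust_to

# Illustrative, made-up numbers: RL steps trained against the monitor, and the
# monitor's recall on held-out deceptive episodes at each checkpoint.
steps = [0, 100, 500, 1000, 5000]
recall = [0.98, 0.97, 0.93, 0.71, 0.40]
level = x_robust_level(steps, recall)
print(f"monitor is {level}-robust (recall >= 90% up to {level} RL steps)")
```

Reporting a level rather than pass/fail makes it explicit that a monitor which passes at low pressure may still fail against a more determined adversary.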

Datasets and evaluation protocols. This group brainstormed and then ranked concrete evaluation setups for lie detectors. Participants proposed around 30 ideas, then voted on which would be most informative for building trust in deception detection methods. The group endorsed the following top-rated ideas:

- Red-team/blue-team secret task protocols. The red team trains a model to accomplish a hidden deceptive task without triggering the blue team's monitor, and the blue team is allowed to interrogate the model. This was the highest-rated idea overall.

- Q&A interrogation protocols. Rather than applying a lie detector to a model's original suspicious behavior, ask follow-up questions about motivations and apply the detector to those answers instead. This shifts the problem to a setting where lie detectors may be more reliable.

- Social deception games. Evaluate detection methods by their ability to help in simple deception games (like Liar's Poker or Among Us), where ground truth about deception is clear.

- Debate with a judge. A suspect model tries to convince a judge of something, and we measure whether deception detection tools help the judge reach better conclusions.

Lower-rated ideas included simple instructed deception transcripts and sycophancy datasets, which the group concluded were too easy or too far removed from realistic deception scenarios to be informative.
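The Q&A interrogation idea can be sketched as a small harness. The version below assumes two hypothetical callables, `ask` (query the model) and `lie_score` (any lie detector returning a score in [0, 1]); the follow-up questions, the 0.5 threshold, and the max-aggregation rule are illustrative choices, not part of the group's proposal.

```python
from typing import Callable, Sequence, Tuple

# Hypothetical follow-up questions about the model's motivations.
FOLLOW_UPS = (
    "Why did you take that action?",
    "Were you pursuing any goal you have not mentioned?",
    "Would you have acted differently if you were not being monitored?",
)

def interrogate(ask: Callable[[str], str],
                lie_score: Callable[[str, str], float],
                follow_ups: Sequence[str] = FOLLOW_UPS,
                threshold: float = 0.5) -> Tuple[bool, float]:
    """Q&A interrogation: instead of scoring the original suspicious behavior,
    ask follow-up questions and score the model's answers with the lie detector.
    Returns (flagged, highest score)."""
    scores = [lie_score(q, ask(q)) for q in follow_ups]
    worst = max(scores)  # one suspicious answer is enough to flag the episode
    return worst > threshold, worst

# Toy stubs standing in for a real model and a real lie detector.
def toy_ask(question: str) -> str:
    if "goal" in question:
        return "No, I had no other goal."
    return "I was following the user's instructions."

def toy_lie_score(question: str, answer: str) -> float:
    return 0.8 if "no other goal" in answer else 0.1

flagged, score = interrogate(toy_ask, toy_lie_score)
print(flagged, score)
```

Taking the maximum means a single suspicious answer flags the episode; averaging across answers would be less sensitive but more tolerant of a noisy detector.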

Principles for Evaluating Deception Detection Methods

The final session of the afternoon was a collaborative exercise to develop shared principles for how the field should evaluate deception detection and mitigation methods. FAR.AI drafted an initial set of 13 principles, and participants voted, debated, and refined them. Two additional principles were proposed during discussion, bringing the total to 15.

The process surfaced clear areas of consensus. Five principles emerged as strong "must-haves": points that any serious deception detection paper or deployment should address:

- Establish ground truth. How do you know whether a model is actually lying? What counts as ground truth, and how is it established? This received near-unanimous support.

- Document key assumptions. What would need to change about the world for your method to stop working? This could include changes such as new architectures or different deployment settings. This also received near-unanimous support.

- Evaluate transfer across distributions. Does the method work outside the distribution it was trained on? Participants pushed for documenting the intended deployment distribution and what's known about how the method transfers.

- Specify the target phenomenon. What exactly is the method trying to detect? Deceptive intent? False statements the model knows are false? Contradictions between prompt and response? Pinning this down precisely is particularly important for deception, where generalization matters (see the previous point).

- Specify the evaluated inputs. On what kinds of inputs has the method actually been tested? On-policy vs. off-policy deception and prompted vs. unprompted deception are potentially very different settings, so these distinctions matter for interpreting results.
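Establishing ground truth and specifying the evaluated inputs both pay off when results are broken down by input type rather than pooled. The sketch below assumes you have a ground-truth label and a detector score for every example; it computes AUROC separately for each slice, so a weak slice shows up as a transfer failure instead of being averaged away. The slice names and scores are made up for illustration.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

def auroc(pos: List[float], neg: List[float]) -> float:
    """Probability that a randomly chosen deceptive example scores higher than a
    randomly chosen honest one (ties count as half). O(len(pos) * len(neg))."""
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else 0.5 if p == n else 0.0
    return wins / (len(pos) * len(neg))

def auroc_by_slice(records: Iterable[Tuple[str, bool, float]]) -> Dict[str, float]:
    """records: (slice_name, is_deceptive, detector_score), where is_deceptive is
    the ground-truth label. Slices lacking either class are skipped, because
    AUROC is undefined for them."""
    pos: Dict[str, List[float]] = defaultdict(list)
    neg: Dict[str, List[float]] = defaultdict(list)
    for slice_name, is_deceptive, score in records:
        (pos if is_deceptive else neg)[slice_name].append(score)
    slices = sorted(set(pos) | set(neg))
    return {s: auroc(pos[s], neg[s]) for s in slices if pos[s] and neg[s]}

# Made-up scores for two hypothetical input slices.
records = [
    ("prompted/off-policy", True, 0.9), ("prompted/off-policy", True, 0.8),
    ("prompted/off-policy", False, 0.2), ("prompted/off-policy", False, 0.3),
    ("unprompted/on-policy", True, 0.6), ("unprompted/on-policy", True, 0.4),
    ("unprompted/on-policy", False, 0.5), ("unprompted/on-policy", False, 0.3),
]
print(auroc_by_slice(records))
```

In this toy data the detector looks perfect on prompted, off-policy inputs but noticeably weaker on unprompted, on-policy ones, which a single pooled number would hide.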

Several principles were rated as valuable "nice-to-haves": principles that would make for excellent research if followed, but whose omission researchers shouldn't be criticized for. These are:

- Document the method's resource and monitoring requirements. What does it take to apply this method? This includes resource requirements (compute, data, latency), whether it depends on a trusted monitor model and how capable that model needs to be, and whether it assumes monitorable or legible chain-of-thought. Methods that assume access to a trusted model at least as capable as the model being monitored face a bootstrapping problem as capabilities increase; methods that rely on monitorable reasoning traces may break if models learn to obscure their reasoning. These dependencies should be made explicit so practitioners can assess whether a method is viable for their setting.

- Evaluate method performance across different model capability levels. Does the method work on more capable models, or has it only been tested on smaller ones? This should be shown through explicit measurements across models. There was disagreement about minimum useful model sizes, but broad agreement that a method validated only on small models tells us little about whether it will work at frontier scale.

- Document known failure cases. Where does the method break? At minimum, describe limitations and failure modes without cherry-picking. Ideally, deliberately red-team the method, although participants acknowledged this is a resource expense not every paper can bear. Some also noted there may be reasons not to make failure cases entirely public.

- Document what the method is actually measuring. What is the measure detecting, why is it a useful proxy for the intended phenomenon, and in what ways is it an imperfect proxy? This shifts the burden from proving you're measuring the right thing to transparently documenting what you are measuring and its limitations. Participants agreed that this is generally very difficult, but extremely useful if determined well.
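The documentation principles above could be captured in a structured "card" published alongside a method. The sketch below is a hypothetical schema: the `DetectorCard` name, its fields, and the two-model threshold are assumptions, not something the workshop specified. Its `warnings()` method flags the dependencies participants singled out as fragile.

```python
from dataclasses import dataclass, field
from typing import List

@dataclass
class DetectorCard:
    """Hypothetical schema for documenting a deception detector's requirements and limits."""
    name: str
    target_phenomenon: str               # e.g. "statements the model believes are false"
    needs_weights_access: bool           # white-box methods need model internals
    needs_trusted_monitor: bool
    monitor_must_match_capability: bool  # bootstrapping risk if True
    assumes_legible_cot: bool
    compute_overhead: str                # free text, e.g. "one extra forward pass"
    models_evaluated: List[str] = field(default_factory=list)
    known_failure_cases: List[str] = field(default_factory=list)
    proxy_limitations: str = ""          # how the measured signal differs from the target

    def warnings(self) -> List[str]:
        """Flag dependencies that the principles above describe as fragile."""
        out = []
        if self.needs_trusted_monitor and self.monitor_must_match_capability:
            out.append("bootstrapping: needs a trusted monitor at least as capable as the target")
        if self.assumes_legible_cot:
            out.append("may break if models learn to obscure their reasoning")
        if len(self.models_evaluated) < 2:  # arbitrary illustrative threshold
            out.append("evaluated on fewer than two models; scaling behavior unknown")
        if not self.known_failure_cases:
            out.append("no failure cases documented")
        return out

# A hypothetical black-box chain-of-thought monitor, documented honestly.
card = DetectorCard(
    name="cot-judge (hypothetical)",
    target_phenomenon="stated intent to deceive in the reasoning trace",
    needs_weights_access=False,
    needs_trusted_monitor=True,
    monitor_must_match_capability=True,
    assumes_legible_cot=True,
    compute_overhead="one monitor-model call per transcript",
    models_evaluated=["small-model-A"],
)
for warning in card.warnings():
    print("-", warning)
```

A card like this shifts the emphasis the way the last principle suggests: rather than claiming a method measures the right thing, it states plainly what the method depends on and where it is known to fall short.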

One principle was singled out as being important but far from being ready to be required in all research: evaluating robustness to optimization pressure (i.e., what happens when you train against the detector). Multiple groups felt this was a major standalone research question, not something every new method paper should be expected to address.

Participants also emphasized that the right level of rigor depends on context: a system card making safety claims about a deployed model needs to meet a higher bar than a research paper exploring a new technique.

What's Next

The workshop surfaced several gaps in the current understanding of deception, and the discussion groups, particularly the datasets group, identified specific research gaps. We are interested in funding projects on any of the priority datasets identified by that group, so please get in touch at rick@far.ai if you have a proposal.

We are continuing our work on white-box methods. The principles session highlighted that research on the robustness of white-box methods is sparse, and we are excited to keep exploring this, building on our recent work. More broadly, we hope that the principles serve as best practices for future research on deception.

For those interested in going deeper on FAR.AI's deception research, two resources:

- The Obfuscation Atlas: our recent work investigating what happens when you train models against lie detection probes, mapping out the landscape of honest, deceptive, and obfuscated outcomes.

- The Promise of White-Box Tools for Detecting and Mitigating AI Deception: our longer argument for why white-box methods deserve more investment, including model organisms demonstrating concrete failures of black-box monitoring.

We're grateful to all the participants who brought their expertise and candor to the table. The field of deception detection is still young, but as AI systems are increasingly relied on in high-stakes situations, it’s more important than ever to quickly develop a shared understanding of the most important issues.

If you're working on related problems and want to get involved, we'd love to hear from you.